Comparisons of Disksuite vs Volume Manager

Characteristic | Solstice Disksuite | Veritas Volume Manager |

Availability | Free with a server license; workstation use requires payment. Sun seems to have an on-again, off-again stance on whether future development of this product will continue, but it is currently available for Solaris 8 and will continue to be supported in that capacity. The current word is that future development is on again. Salt liberally. (Just to further confuse things, SDS is free for Solaris 8 up to 8 CPUs, even for commercial use.) | Available from Sun or directly from Veritas (pricing may differ considerably). Also excellent educational pricing. Free with storage array or A5000 (but cannot use striping outside the array device). |

Installation | relatively easy. Mirroring the rootdisk, swap, and other disks requires special steps that must be done in exactly the right order. | easy. Follow the onscreen prompts, let it do its reboots. |

Upgrading | easy, remove patches, remove packages, add new packages | slightly more complex, but well documented. There are several ways to do it. |

Replacing failed | very easy. replace disk and resynchronize | very easy. replace disk and resynchronize |

Replacing failed | relatively easy. boot off mirror, replace disk, resync, boot off primary. | easy to slightly complex depending on setup. Well documented. 11 steps or fewer. |

Replacing failed | trivial | trivial |

extensibility / number | Traditionally, relatively easy but EXTREMELY limited by usage of the hard partition table on disk. The total number of volumes on a typical system is very limited because of this. If you have a lot of disks, you can still create a lot of metadevices. The default is 256 max, but this can be increased by setting nmd=XXXX in /kernel/drv/md.conf and then rebooting. Schemes for managing metadevice naming for a large number of devices are available, but clunky and occasionally contrived. NOTE: SDS 4.2.1+ (avail Sol7) removes the reliance upon the disk VTOC for making metadevices through 'soft partitions'. | trivial. No limitations will be encountered by most people. Number of volumes is potentially limitless. |

Moving a volume | difficult unless special planning and precautions have been taken in laying out the proper partitions and disk labels beforehand. Somewhat hidden by the GUI. | trivial. On redundant volumes it can be done on the fly. |

Growing a volume | volume can be extended in two different ways. It can be concatenated with another region of space someplace else or, if there is contiguous space following ALL of the partitions of the original volume, the stripe can be extended. Using concatenation you could grow a 4 disk stripe by 2 additional disks (e.g. a 4 disk stripe concatenated with a 2 disk stripe). | volume can be extended in several ways. The columns of the stripe can be extended for RAID0/5, simple single-disk volumes can be grown directly, and in VxVM > 3.0 a volume can be relaid out (the number of columns in a RAID-5 stripe can be reconfigured on the fly!). Contiguous space is not required. In VxVM < 3.0, if you are increasing the size of a stripe, you must have space on N disks, where N is the original number of disks in the stripe. You can't 'grow' (but could relayout) a 4 disk stripe by adding two more disks, but you could with 4. Extremely flexible. |

Shrinking a volume | difficult. You must adjust all disk or soft partitions manually. | trivial. vxresize can shrink filesystem and volume in one command. |

Relayout volume | Requires dump/restore of data. | Available on the fly for VxVM > 3.0 |

Logging | in SDS a Meta-trans device may be used, which provides a log-based addition on top of a UFS filesystem. This transaction log, if used, should be mirrored! (Loss of the log results in a filesystem that may be corrupted even beyond fsck repair.) Use of a UFS+ logging filesystem instead of a trans device is a better alternative. UFS+ logging is available in Sol7 and above. | VxVM has RAID-5 logs and mirror/DRL logs. Logging, if used, need not be mirrored, and the volume can continue operating if the log fails. Having one is highly recommended for crash recovery. Logs are very small, typically 1 disk cylinder or so. The SDS logs are really more equivalent to a VxFS log at the filesystem level, but it is worth mentioning the additional capabilities of VxVM in this regard. UFS+ with logging can also be used on a VxVM volume. There are many kinds of purpose-specific logs for things like fast mirror resync, volume replication, database logging, etc. |

Performance | Your mileage may vary. SDS seems to excel at simple RAID-0 striping, but seems to be only marginally faster than VxVM. VxVM also seems to gain back when using large interleaves. For best results, benchmark YOUR data with YOUR app and pay very close attention to your data size and your stripe unit/interleave size. RAID5 on VxVM is almost always faster by 20-30%. | |

Notifications (see also) | SNMP traps are used for notification. You must have something set to receive them. Notifications are limited in scope. | VxVM uses email for notifying you when a volume is being moved because of bad blocks using hot relocation or sparing. The notification is very good. |

Sparing | hot spare disks may be designated for a diskset, but must be done at the slice level. | hot spare disks may be designated for a diskgroup. Or, extra space on any disk can be used for dynamic hot relocation without the need for reserving a spare. |

Terminology | SDS diskset = VxVM diskgroup, SDS metadevice = VxVM volume, SDS Trans device ~ VxVM log, VxVM has subdisks which are units of data (e.g. a column of a stripe) that have no SDS equivalent. VxVM plexes are groupings of subdisks (e.g. into a stripe) that have no real SDS equivalent. VxVM Volumes are groupings of plexes. (e.g. the data plex and a log plex, or 2 plexes for a 0+1 volume) | |

GUI | Most people prefer the VxVM GUI, though there are a few that prefer the (now 4 years old) SDS gui. SDS has been renamed SVM in Solaris9 and the GUI is supposedly much improved. VxVM has gone through 3-4 GUI incarnations. Disclaimer: I *never* use the GUI | |

command line usage | metareplace, metaclear to delete, metainit for volumes, metadb for state databases, etc | vxassist is used for creation of all volume types, vxsd, vxvol, vxplex operate on appropriate VxVM objects (see terminology above). Generally, there are many more vx specific commands, but normal usage rarely requires 20% of these except for advanced configurations (special initializations, using alternate pathing, etc) |

device database configuration copies | Kept in special, replicated partitions that you must set up on disk and configure via metadb. /etc/opt/SUNWmd and /etc/system contain the boot/metadb information and the description of the volumes. Lose these and you have big problems. NOTE: in Solaris9 SVM, configuration copies are now kept on the metadisks themselves with the data, like VxVM. | Kept in the private region on each disk. Disks can move about and the machine can be reinstalled without having to worry about losing data in volumes. |

Typical usage | Simple mirroring of root disk, simple striping of disks where situation is relatively stagnant (e.g. just a bunch of disks with RAID0 and no immediate scaling or mobility concerns). Scales well in size of small number of volumes, but poorly in large number of smaller volumes. | enterprise ready. Data mobility, scalability, and configuration are all extensively addressed. Replacing failed encapsulated rootdisk is more complicated than it needs to be. See Sun best practices paper for a better way. Other alternatives exist. |

Best features | Simple/simplistic - root/swap mirroring and simple striping is a no-brainer, free or nearly so. Easier to fix by hand (without immediate support) when something goes fubar (VxVM is much more complex to understand under the hood). | extensible, error notifications are good, extremely configurable, relayout on the fly with VxVM > 3.0, nice integration with VxFS, best scalability. Excellent edu pricing. |

Worst features | configuration syntax (meta*), configuration information stored on host system (< Sol9). Metadb/slices -- a remnant from SunOS4 days! -- needs to be completely redone; naming is inflexible and limited. Number of hard metadevices has kernel hack workarounds, but is still very limiting. Required mirroring of trans logs is inconvenient, but mitigated by using native UFS+ w/logging in Solaris7 and above. Lack of drive level hotsparing (see sparing) is extremely inconvenient. | expensive for enterprises and big servers, root mirroring and primary rootdisk failure for encapsulated rootdisk is too complex (but well documented) (should be fixed in VxVM 4.0), somewhat steep learning curve for advanced usage. Recovery from administrative SNAFUs (involving restore and single user mode) on a mirrored rootdisk can be troublesome. |

Tips | keep backups of your configuration in case of corruption. Regular usage of metastat, metastat -p, and prtvtoc can help. | In VxVM regular usage of vxprint -ht is useful for disaster recovery. There are also several different disaster recovery scripts here |

Using VxVM for | Many people do this. There are tradeoffs. On the one hand you have added simplicity in the management of your rootdisks by not having to deal with VxVM encapsulation, which can ease recovery and upgrades. On the other hand, you now have the added complexity of having to maintain a separate rootdg volume someplace else, or use a simple slice (which, by the way, neither Sun nor Veritas will support if there are problems). You also have the added complexity of managing two completely separate storage/volume management products and their associated nuances and patches. In the end it boils down to preference. There is no right or wrong answer here, though some will say otherwise. ;) Veritas VxVM 4.0 removes the requirement for rootdg. | |
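
The Tips row above suggests keeping regular copies of the output of metastat, metastat -p, prtvtoc, and vxprint -ht. A minimal sketch of how that might be scripted follows. The backup directory, the file naming, and the save_output helper are all hypothetical choices made for illustration, not part of either product. It assumes a POSIX shell (on older Solaris that would likely mean /usr/xpg4/bin/sh rather than the legacy /bin/sh) and simply skips any tool that is not installed.

```shell
# Sketch only: snapshot volume manager configuration into dated files.
# BACKUP_DIR and the file names below are illustrative assumptions.
BACKUP_DIR="${BACKUP_DIR:-/var/tmp/volmgr-config}"
STAMP=$(date +%Y%m%d)

# Hypothetical helper: run a command only if it is installed and
# save its output (stdout and stderr) to BACKUP_DIR/<name>.<date>.
save_output() {
    outfile="$1"
    shift
    if command -v "$1" >/dev/null 2>&1; then
        "$@" > "$BACKUP_DIR/$outfile.$STAMP" 2>&1
    fi
}

mkdir -p "$BACKUP_DIR"

# SDS: metadevice status, plus the configuration in md.tab format
save_output metastat metastat
save_output metastat-p metastat -p

# Disk labels: one prtvtoc dump per whole-disk slice (s2), if any exist
for d in /dev/rdsk/*s2; do
    [ -e "$d" ] || continue
    save_output "prtvtoc-$(basename "$d")" prtvtoc "$d"
done

# VxVM: full object hierarchy (disks, subdisks, plexes, volumes)
save_output vxprint-ht vxprint -ht
```

Run from cron, something like this would leave a dated trail of configuration snapshots. Keeping a copy off the host is probably wise, since the point is to have the information when the host itself is in trouble.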

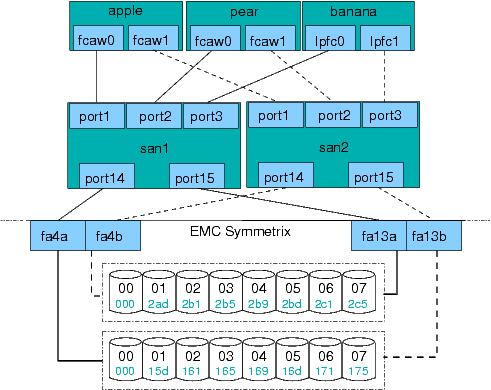

Storage area networking
